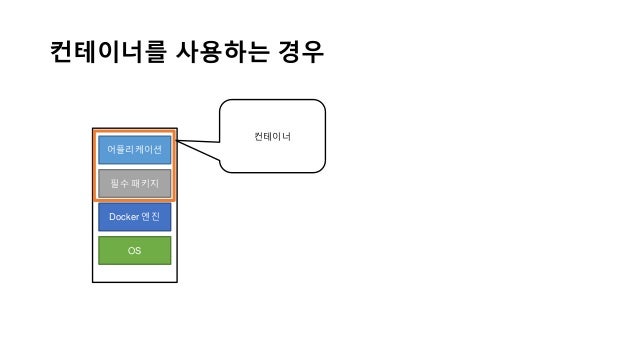

Full support for Kubernetes deployments had been in development in the community for quite a while; in the past, Airflow users had to rely on 3rd-party Docker images, Kubernetes config files, and Helm charts to run Airflow on Kubernetes. Over the last year, community members made an enormous effort to provide robust, simple, and versatile support for these deployments, one that would serve all kinds of Airflow users. The work started with the official container image, continued with the quick-start docker-compose configuration, and culminated in April with the release of the official Helm Chart for Airflow. Building and deploying is also much easier when the official Docker image is used as the base. This talk is aimed at Airflow users who would like to make use of all this effort.

There are many different ways to deploy an Airflow cluster, from a simple installation with CeleryExecutor to Dockerized deployments. The KubernetesExecutor runs as a process in the Airflow scheduler; the scheduler itself does not necessarily need to run on Kubernetes, but it does need access to a Kubernetes cluster. KubernetesPodOperator is implemented in the apache-airflow-providers-cncf-kubernetes provider, and GKEStartPodOperator, which lives in the Google provider (apache-airflow-providers-google), builds on it. Tasks running in Kubernetes pods are independent of each other and execute in an environment separated from Airflow.
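Both the KubernetesExecutor's scheduler and KubernetesPodOperator need to reach the Kubernetes API. Inside a pod, a client normally uses in-cluster configuration (the service-account token Kubernetes mounts under `/var/run/secrets/kubernetes.io/serviceaccount` plus the `KUBERNETES_SERVICE_HOST`/`KUBERNETES_SERVICE_PORT` environment variables); outside the cluster, for example a scheduler on a VM, it falls back to a kubeconfig file. Setting `in_cluster=True` on KubernetesPodOperator selects the former. The sketch below is a hypothetical, standard-library-only helper (`choose_kube_config` is an invented name) that illustrates this decision; it is not the code Airflow or the Kubernetes Python client actually runs.

```python
import os
from pathlib import Path
from typing import Mapping, Optional

# Standard location where Kubernetes mounts service-account credentials in a pod.
SERVICE_ACCOUNT_DIR = Path("/var/run/secrets/kubernetes.io/serviceaccount")
# Conventional kubeconfig location used when KUBECONFIG is not set.
DEFAULT_KUBECONFIG = Path.home() / ".kube" / "config"


def choose_kube_config(
    env: Optional[Mapping[str, str]] = None,
    sa_dir: Path = SERVICE_ACCOUNT_DIR,
) -> str:
    """Describe which Kubernetes config a client would use (hypothetical helper)."""
    env = os.environ if env is None else env
    host = env.get("KUBERNETES_SERVICE_HOST")
    port = env.get("KUBERNETES_SERVICE_PORT")
    if host and port and (sa_dir / "token").is_file():
        # Running inside a pod: talk to the API server with the mounted token.
        return f"in-cluster: https://{host}:{port}"
    # Outside the cluster: fall back to a kubeconfig file.
    kubeconfig = env.get("KUBECONFIG", str(DEFAULT_KUBECONFIG))
    return f"kubeconfig: {kubeconfig}"
```

Passing `env` and `sa_dir` explicitly keeps the helper testable; by default it reads the real process environment and mount path.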
In this talk, Jarek and Kaxil will discuss the official, community support for running Airflow in the Kubernetes environment. The KubernetesPodOperator uses the Kubernetes Pod object to decouple the workflow logic from the Airflow context.

The puckel/docker-airflow repository contains a Dockerfile (.template) of Airflow for Docker images published to the public Docker Hub registry.

Far from being the ultimate set-up, these are some settings that worked for me using the docker-compose file from Airflow on the core node and the workers: Main …

In the Helm chart, Airflow supports setting connections only via URI. The easiest way to get the URI is to create the connection in the webserver UI in the normal way, then open a shell in the running webserver container (for example with `kubectl exec`) and run `airflow connections get <conn_id>`.

I'm running a task using a KubernetesPodOperator with `in_cluster=True`, and it runs well; I can even run `kubectl logs pod-name` and all the logs show up. My Airflow service runs as a Kubernetes deployment with two containers, one for the webserver and one for the scheduler. However, the airflow-webserver is unable to fetch the logs:

`*** Log file does not exist: /tmp/logs/dag_name/task_name/T23:17:33.455051+00:00/1.log`

`*** Fetching from:`

`*** Failed to fetch log file from worker. HTTPConnectionPool(host='pod-name-7dffbdf877-6mhrn', port=8793): Max retries exceeded with url: /log/dag_name/task_name/T23:17:33.455051+00:00/1.log (Caused by NewConnectionError(': Failed to establish a new connection: Connection refused'))`

It seems as if the pod is unable to connect to the Airflow logging service on port 8793. If I `kubectl exec` into the container with bash, I can `curl` localhost on port 8080, but not on …

Note: if Airflow runs in a dev environment (locally instead of on Kubernetes), it all works perfectly.
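The error above shows the webserver requesting the log file over HTTP from the worker's hostname (here the pod name) on port 8793 and having the connection refused. A minimal standard-library sketch for reproducing that request from inside a container might look like this; the URL layout mirrors the `/log/...` path in the error message, while `build_log_url` and `port_is_open` are hypothetical helpers, not Airflow APIs.

```python
import socket


def build_log_url(hostname: str, relative_path: str, port: int = 8793) -> str:
    """Build the URL a webserver would request from a worker's log server.

    Hypothetical helper: the layout copies the '/log/...' path seen in the error.
    """
    return f"http://{hostname}:{port}/log/{relative_path}"


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers 'Connection refused', timeouts, and DNS failures alike.
        return False
```

Running `port_is_open("localhost", 8793)` in the webserver container and getting `False`, while the same check on 8080 returns `True`, is consistent with the "Connection refused" above: nothing reachable is listening on the log-server port from that container.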
